It will grow up to the maximum specified, which is 20G for the Kitematic docker-machine default. I did try to work around this by having docker-machine create the box for me before launching Kitematic, with mixed success. I needed to use the docker-machine that is bundled with Kitematic (as opposed to the one I have installed separately) to create the machine manually. After this, Kitematic was able to use that virtual machine:

```shell
# create an alias to Kitematic's bundled docker-machine
# (the inner double quotes are needed because the path contains a space and parentheses)
alias dm='"/Applications/Kitematic (Beta).app/Contents/Resources/resources/docker-machine"'

# create the dev docker machine with a 40G (dynamically grown) disk; the size is given in MB
dm create -d virtualbox --virtualbox-disk-size '40000' dev
```
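Since the VirtualBox driver takes the disk size in MB, a small wrapper can make the intent clearer. This is only a sketch: `gb_to_mb` and `create_cmd` are hypothetical helper names, the conversion assumes 1 GB = 1000 MB (matching the `40000` used for 40G above), and the command is printed rather than run so it can be checked first.

```shell
#!/bin/sh
# Convert a size in GB to the MB value expected by --virtualbox-disk-size.
# Assumes 1 GB = 1000 MB, matching the '40000' used for a 40G disk.
gb_to_mb() {
    echo $(( $1 * 1000 ))
}

# Print (but do not run) the create command for a machine.
# Arguments: machine name, disk size in GB.
create_cmd() {
    printf 'docker-machine create -d virtualbox --virtualbox-disk-size %s %s\n' \
        "$(gb_to_mb "$2")" "$1"
}

# Show the command for a 40G machine called "dev".
create_cmd dev 40
```

The printed line can be pasted into a terminal once it looks right, substituting the Kitematic-bundled binary for `docker-machine` if needed.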

I have a set-up for a course on databases and data management that runs an extended scipystack plus MongoDB and PostgreSQL, as well as an OpenRefine container. One of the activities uses quite a large dataset (a national road accident dataset and a whole bunch of shapefiles) spread across a sharded MongoDB database. (Finding effective ways of managing prepopulated databases and configurations that can be distributed efficiently to students is another issue. A second issue is the ability to easily create and run docker-compose configs from within the UI environment. I was going to blog some thoughts on that when I get a chance.)

Re: mapping the volumes - I seem to recall I had a problem with that on a Mac? (I'm right at the limit of my skills/knowledge with all this - an optimistic technologist, rather than a sysadmin/devops person! ;-) Also - how would that work for a distribution?

If I have a large database, I don't really want students to have to import the data, index it, etc. The route I am taking is to build a VirtualBox box with prebuilt images and some prebuilt data volumes. Students then use vagrant and vagrant-docker-compose to fire up the containers and link directly to the prebuilt volumes. I guess an alternative distribution route would be for me to build the databases, seed them, export a backup, distribute the backup, and then let students fire up db containers, create new linked/mapped volumes and restore the data from the backup (eg on a USB stick) into a mapped data volume? I haven't tried that route yet. (Things are not helped by the fact that I'm not a db admin either, so I don't know how to do that basic dbadmin stuff anyway, let alone in a container context! ;-) Being able to make use of docker-compose in a Kitematic context would be really helpful, I think.
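A rough, untested sketch of what that backup-and-restore route might look like for the PostgreSQL container, using `pg_dump` and `psql` via `docker exec`. The container name, database name and file path are hypothetical, the `postgres` superuser is an assumption about how the image is configured, and the `DOCKER` override is just a convenience of this sketch for previewing commands.

```shell
#!/bin/sh
# DOCKER can be overridden (e.g. DOCKER=echo) to preview the commands
# instead of running them against a real Docker daemon.
DOCKER="${DOCKER:-docker}"

# Dump a database from a running container to a file on the host.
# Arguments: container name, database name, output file.
pg_backup() {
    $DOCKER exec "$1" pg_dump -U postgres "$2" > "$3"
}

# Load a dump file from the host into a database in a running container.
# The target database is assumed to exist already.
# Arguments: container name, database name, input file.
pg_restore_sql() {
    $DOCKER exec -i "$1" psql -U postgres "$2" < "$3"
}

# Example (hypothetical names):
#   pg_backup coursedb accidents accidents.sql        # on the build machine
#   pg_restore_sql coursedb accidents accidents.sql   # on the student machine
```

The same pattern should carry over to the MongoDB containers with `mongodump` and `mongorestore`, although a sharded set-up would probably need more care than this.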

I'd quite like a GUI option so I could create a new composition, drag images from the Kitematic sidebar into it to specify a container of that image type, name the container on that canvas, and then use a graphical tool to wire them together :-) I'd also want to be able to open a panel that lets me specify the properties of each container. In addition, I'd split the left-hand sidebar into available images and available compositions.

I tried that but couldn't seem to mount database directories onto the host (on a Mac; building the machine via vagrant-docker-compose). This is odd, though, because I can mount the host notebook folder for my IPython notebook server container and also a host directory for an OpenRefine server container's project files. Just checked the Dockerfile again and it seems I ran the successfully mounted container as privileged.

Setting the db containers as privileged won't let me mount them against the host though, nor can I get linked data containers to mount against the host?

That's the goal of Kitematic - although I feel going over 20GB in containers/images isn't the typical setup. Out of curiosity, how many containers/images do you have?

I blitzed through 20GB in the first day of using Kitematic.

The openstreetmap-carto repository needs to be a directory that is shared between your host system and the Docker virtual machine. Home directories are shared by default; if your repository is in another place, you need to add this to the Docker sharing list. Sufficient disk space of several gigabytes is generally needed.

I am trying to understand how I can increase the available space docker offers to the containers. TL;DR - How can I attribute more hard disk space to docker containers? My core system: docker info
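As a quick sanity check before mounting a directory, something like the following could tell you whether a path sits under your home directory and so should be covered by the default sharing. It is a minimal sketch: `under_home` and `check_mount_source` are hypothetical helper names, and the check only compares path prefixes; it does not query Docker's actual sharing list.

```shell
#!/bin/sh
# Succeed if the given absolute path is $HOME itself or lies beneath it.
under_home() {
    case "$1" in
        "$HOME"|"$HOME"/*) return 0 ;;
        *) return 1 ;;
    esac
}

# Print a short hint about whether a path needs adding to the sharing list.
check_mount_source() {
    if under_home "$1"; then
        echo "$1: under home directory, shared by default"
    else
        echo "$1: outside home directory, add it to the Docker sharing list"
    fi
}

# Check the current working directory.
check_mount_source "$PWD"
```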

My dev environment re-builds huge caches (about 3GB each), and each new build is versioned. It's not uncommon for my team to go through several versions a day. That's on top of the 10-15GB of extra tooling we bring in through 5-7 different Dockerfiles via Docker Compose. We delete all active containers and kill any images, but the artefacts left behind by switching versions all the time easily push us over this number.

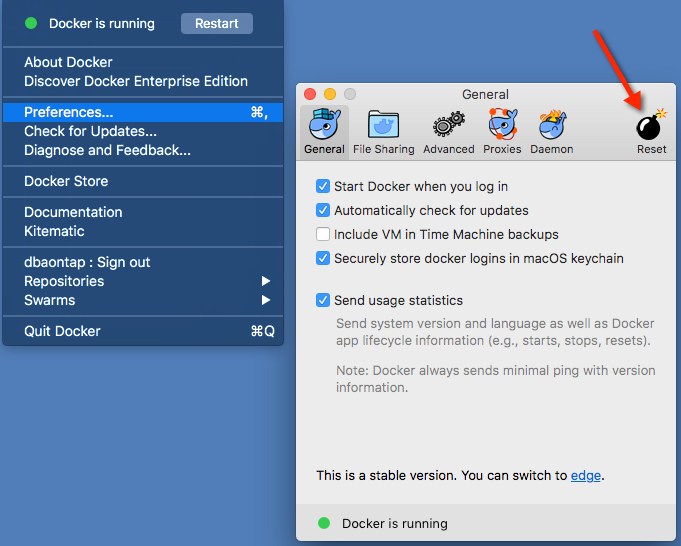

SSH'ing into the box and manually culling is arduous and doesn't always prevent the 'no space left on device' warnings. Applying a 20GB limit is too opinionated and arbitrary and clearly doesn't work for all users, hence this issue. I understand this is VirtualBox's problem, but since Kitematic is the front-facing entry point to OS X Docker (and docker-machine in turn), it'd be nice if one of the Docker tools took ownership of the underlying boot2docker set-up / machine resizing with a simpler GUI, or at least some kind of CLI flag to abstract it. I think it'd go a long way to support Docker's goal of making Docker Toolbox a friendly front-end. Sorry if I'm airing opinions in the wrong place. It's just because Kitematic is the first layer we use.
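In the meantime, a small check like the one below can at least give early warning before the 'no space left on device' errors start. It is a sketch under assumptions: `disk_use_pct` and `disk_nearly_full` are hypothetical helper names, the 90% threshold is arbitrary, and the parsing relies on the POSIX `df -P` layout, where the fifth column is the capacity.

```shell
#!/bin/sh
# Print the use percentage (digits only) of the filesystem holding a path.
# Relies on the POSIX "df -P" layout: the 5th column is e.g. "45%".
disk_use_pct() {
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Succeed if usage of the filesystem holding path $1 is at or above
# the threshold $2 (a whole-number percentage).
disk_nearly_full() {
    [ "$(disk_use_pct "$1")" -ge "$2" ]
}

# Warn when the root filesystem is at or above 90% (arbitrary threshold).
if disk_nearly_full / 90; then
    echo "warning: / is at $(disk_use_pct /)% capacity"
fi
```

Run inside the VM (for example via `docker-machine ssh dev`), the path to check would be wherever the Docker data disk is mounted; on boot2docker I believe that is `/mnt/sda1`, but treat that as an assumption. Untagged leftovers can then be removed with `docker rmi $(docker images -q -f dangling=true)`.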